IEEE 2017: Two-Factor Data Access Control With Efficient Revocation for Multi-Authority Cloud Storage Systems

Abstract: Attribute-based encryption, especially ciphertext-policy attribute-based encryption, can fulfill the functionality of fine-grained access control in cloud storage systems. Since users' attributes may be issued by multiple attribute authorities, multi-authority ciphertext-policy attribute-based encryption is an emerging cryptographic primitive for enforcing attribute-based access control on outsourced data. However, most of the existing multi-authority attribute-based systems are either insecure in attribute-level revocation or lack efficiency in communication overhead and computation cost. In this paper, we propose an attribute-based access control scheme with two-factor protection for multi-authority cloud storage systems. In our proposed scheme, any user can recover the outsourced data if and only if this user holds sufficient attribute secret keys with respect to the access policy and an authorization key in regard to the outsourced data. In addition, the proposed scheme enjoys the properties of constant-size ciphertext and small computation cost. Besides supporting attribute-level revocation, our proposed scheme allows the data owner to carry out user-level revocation. The security analysis, performance comparisons, and experimental results indicate that our proposed scheme is not only secure but also practical.

IEEE 2017: Optimizing Cloud-Service Performance: Efficient Resource Provisioning via Optimal Workload Allocation

Abstract: Cloud computing is being widely accepted and utilized in the business world. From the perspective of businesses utilizing the cloud, it is critical to meet their customers’ requirements by achieving service-level objectives. Hence, the ability to accurately characterize and optimize cloud-service performance is of great importance. In this paper a stochastic multi-tenant framework is proposed to model the service of customer requests in a cloud infrastructure composed of heterogeneous virtual machines. Two cloud-service performance metrics are mathematically characterized, namely the percentile and the mean of the stochastic response time of a customer request, in closed form. Based upon the proposed multi-tenant framework, a workload allocation algorithm, termed the max-min-cloud algorithm, is then devised to optimize the performance of the cloud service. A rigorous optimality proof of the max-min-cloud algorithm is also given. Furthermore, the resource-provisioning problem in the cloud is also studied in light of the max-min-cloud algorithm. In particular, an efficient resource-provisioning strategy is proposed for serving dynamically arriving customer requests. These findings can be used by businesses to build a better understanding of how much virtual resource in the cloud they may need to meet customers’ expectations subject to cost constraints.

IEEE 2017: Live Data Analytics With Collaborative Edge and Cloud Processing in Wireless IoT Networks

Abstract: Recently, big data analytics has received considerable attention in a variety of application domains including business, finance, space science, healthcare, telecommunication and the Internet of Things (IoT). Among these areas, IoT is considered an important platform for bringing people, processes, data and things/objects together in order to enhance the quality of our everyday lives. However, the key challenges are how to effectively extract useful features from the massive amount of heterogeneous data generated by resource-constrained IoT devices in order to provide real-time information and feedback to the end users, and how to utilize this data-aware intelligence in enhancing the performance of wireless IoT networks. Although there are parallel advances in cloud computing and edge computing for addressing some issues in data analytics, each has its own benefits and limitations. The convergence of these two computing paradigms, i.e., the massive, virtually shared pool of computing and storage resources from the cloud and real-time data processing by edge computing, could effectively enable live data analytics in wireless IoT networks. In this regard, we propose a novel framework for coordinated processing between edge and cloud computing/processing by integrating advantages from both platforms. The proposed framework can exploit the network-wide knowledge and historical information available at the cloud center to guide edge computing units towards satisfying various performance requirements of heterogeneous wireless IoT networks. Starting with the main features, key enablers and challenges of big data analytics, we describe various synergies and distinctions between cloud and edge processing. More importantly, we identify and describe the potential key enablers for the proposed edge-cloud collaborative framework, the associated key challenges and some interesting future research directions.

IEEE 2017: Optimizing Green Energy, Cost, and Availability in Distributed Data Centers

Abstract: Integrating renewable energy and ensuring high availability are two major requirements for geo-distributed data centers. Availability is ensured by provisioning spare capacity across the data centers to mask data center failures (either partial or complete). We propose a mixed integer linear programming formulation for capacity planning while minimizing the total cost of ownership (TCO) for highly available, green, distributed data centers. We minimize the cost due to power consumption and server deployment, while targeting a minimum usage of green energy. Solving our model shows that capacity provisioning that considers green energy integration not only lowers the carbon footprint but also reduces the TCO. Results show that up to 40% green energy usage is feasible with a marginal increase in the TCO compared to the other cost-aware models.

IEEE 2017: Cost Minimization Algorithms for Data Center Management

Abstract: Due to the increasing usage of cloud computing applications, it is important to minimize the energy cost of a data center and, simultaneously, to improve quality of service via data center management. One promising approach is to switch some servers in a data center to idle mode to save energy while keeping a suitable number of servers in active mode to provide timely service. In this paper, we design both online and offline algorithms for this problem. For the offline algorithm, we formulate data center management as a cost minimization problem by considering energy cost, delay cost (to measure service quality), and switching cost (to change servers’ active/idle mode). Then, we analyze certain properties of an optimal solution which lead to a dynamic programming based algorithm. Moreover, by revising the solution procedure, we successfully eliminate the recursive procedure and achieve an optimal offline algorithm with polynomial complexity.
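The offline formulation above (energy cost, delay cost, and switching cost over a known workload) can be illustrated with a small dynamic program. This is only a sketch under stated assumptions, not the paper's algorithm: the function name `plan_servers`, the three cost weights, and the specific cost shapes (energy linear in active servers, delay proportional to load per active server, switching proportional to the change in active servers, all servers idle before the first slot) are hypothetical choices made for illustration.

```python
from typing import List, Tuple


def plan_servers(loads: List[float], max_servers: int,
                 energy: float = 1.0, delay: float = 4.0,
                 switch: float = 2.0) -> Tuple[float, List[int]]:
    """Return (minimum total cost, active-server count per time slot).

    Assumed (hypothetical) cost model per slot with m active servers:
      energy cost    = energy * m
      delay cost     = delay * load / m   (infeasible if load > 0 and m == 0)
      switching cost = switch * |m - previous m|, starting from 0 active.
    """
    if not loads:
        return 0.0, []
    inf = float("inf")

    def slot_cost(m: int, load: float) -> float:
        if m == 0:
            return inf if load > 0 else 0.0
        return energy * m + delay * load / m

    horizon = len(loads)
    # best[t][m]: cheapest cost of slots 0..t when slot t runs m servers.
    best = [[inf] * (max_servers + 1) for _ in range(horizon)]
    prev = [[0] * (max_servers + 1) for _ in range(horizon)]
    for m in range(max_servers + 1):
        best[0][m] = slot_cost(m, loads[0]) + switch * m
    for t in range(1, horizon):
        for m in range(max_servers + 1):
            cost_here = slot_cost(m, loads[t])
            if cost_here == inf:
                continue
            for k in range(max_servers + 1):
                cand = best[t - 1][k] + switch * abs(m - k) + cost_here
                if cand < best[t][m]:
                    best[t][m] = cand
                    prev[t][m] = k
    # Backtrack from the cheapest final state.
    m = min(range(max_servers + 1), key=lambda i: best[horizon - 1][i])
    total = best[horizon - 1][m]
    plan = [m]
    for t in range(horizon - 1, 0, -1):
        m = prev[t][m]
        plan.append(m)
    plan.reverse()
    return total, plan
```

The table has one state per (time slot, active-server count) pair, so the running time is polynomial in the horizon and the number of servers, which is the flavor of result the abstract describes.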

IEEE 2017: Vehicular Cloud Data Collection for Intelligent Transportation Systems

Abstract: The Internet of Things (IoT) envisions connecting billions of sensors to the Internet in order to provide new applications and services for smart cities. IoT will allow the evolution of the Internet of Vehicles (IoV) from existing Vehicular Ad hoc Networks (VANETs), in which various services will be delivered to drivers by integrating vehicles, sensors, and mobile devices into a global network. To serve VANETs with computational resources, Vehicular Cloud Computing (VCC) has recently been envisioned with the objective of providing traffic solutions to improve our daily driving. These solutions involve applications and services for the benefit of Intelligent Transportation Systems (ITS), which represent an important part of IoV. Data collection is an important aspect of ITS, which can effectively serve online travel systems with the aid of the Vehicular Cloud (VC). In this paper, we use the new paradigm of VCC to propose a data collection model for the benefit of ITS. We show via simulation results that the participation of a low percentage of vehicles in a dynamic VC is sufficient to provide meaningful data collection.

IEEE 2017: RAAC: Robust and Auditable Access Control with Multiple Attribute Authorities for Public Cloud Storage

Abstract: Data access control is a challenging issue in public cloud storage systems. Ciphertext-Policy Attribute-Based Encryption (CP-ABE) has been adopted as a promising technique to provide flexible, fine-grained and secure data access control for cloud storage with honest-but-curious cloud servers. However, in the existing CP-ABE schemes, the single attribute authority must execute the time-consuming user legitimacy verification and secret key distribution, and hence it results in a single-point performance bottleneck when a CP-ABE scheme is adopted in a large-scale cloud storage system. Users may be stuck in the waiting queue for a long period to obtain their secret keys, thereby resulting in low efficiency of the system. Although multi-authority access control schemes have been proposed, these schemes still cannot overcome the drawbacks of single-point bottleneck and low efficiency, due to the fact that each of the authorities still independently manages a disjoint attribute set.

IEEE 2017: Privacy-Preserving Data Encryption Strategy for Big Data in Mobile Cloud Computing

Abstract: Privacy has become a considerable issue as the applications of big data grow dramatically in cloud computing. Implementing these emerging technologies has improved or changed service models and improved application performance in various respects. However, the remarkably growing volume of data has also resulted in many challenges in practice. The execution time of data encryption is one of the serious issues during data processing and transmission. Many current applications abandon data encryption in order to reach an acceptable performance level, which raises privacy concerns. In this paper, we concentrate on privacy and propose a novel data encryption approach, which is called Dynamic Data Encryption Strategy (D2ES). Our proposed approach aims to selectively encrypt data and use privacy classification methods under timing constraints. This approach is designed to maximize the privacy protection scope by using a selective encryption strategy within the required execution time.

IEEE 2017: Identity-Based Remote Data Integrity Checking With Perfect Data Privacy Preserving for Cloud Storage

Abstract: Remote data integrity checking (RDIC) enables a data storage server, say a cloud server, to prove to a verifier that it is actually storing a data owner’s data honestly. To date, a number of RDIC protocols have been proposed in the literature, but most of the constructions suffer from the issue of complex key management, that is, they rely on the expensive public key infrastructure (PKI), which might hinder the deployment of RDIC in practice. In this paper, we propose a new construction of an identity-based (ID-based) RDIC protocol by making use of a key-homomorphic cryptographic primitive to reduce the system complexity and the cost of establishing and managing the public key authentication framework in PKI-based RDIC schemes. We formalize ID-based RDIC and its security model, including security against a malicious cloud server and zero-knowledge privacy against a third-party verifier. The proposed ID-based RDIC protocol leaks no information about the stored data to the verifier during the RDIC process. The new construction is proven secure against the malicious server in the generic group model and achieves zero-knowledge privacy against a verifier. Extensive security analysis and implementation results demonstrate that the proposed protocol is provably secure and practical in real-world applications.

IEEE 2017: Identity-Based Data Outsourcing with Comprehensive Auditing in Clouds

Abstract: Cloud storage systems provide convenient file storage and sharing services for distributed clients. To address integrity, controllable outsourcing and origin auditing concerns on outsourced files, we propose an identity-based data outsourcing (IBDO) scheme equipped with desirable features advantageous over existing proposals in securing outsourced data. First, our IBDO scheme allows a user to authorize dedicated proxies to upload data to the cloud storage server on her behalf, e.g., a company may authorize some employees to upload files to the company’s cloud account in a controlled way. The proxies are identified and authorized with their recognizable identities, which eliminates the complicated certificate management of usual secure distributed computing systems. Second, our IBDO scheme facilitates comprehensive auditing, i.e., our scheme not only permits regular integrity auditing as in existing schemes for securing outsourced data, but also allows auditing of the information on data origin, type and consistency of outsourced files.

IEEE 2017: TAFC: Time and Attribute Factors Combined Access Control on Time-Sensitive Data in Public Cloud

Abstract: The new paradigm of outsourcing data to the cloud is a double-edged sword. On one side, it frees data owners from technical management and makes it easier for them to share their data with intended recipients when data are stored in the cloud. On the other side, it brings about new challenges in privacy and security protection. To protect data confidentiality against the honest-but-curious cloud service provider, numerous works have been proposed to support fine-grained data access control. However, until now, no efficient scheme has provided fine-grained access control together with the capacity for time-sensitive data publishing. In this paper, by embedding the mechanism of timed-release encryption into CP-ABE (Ciphertext-Policy Attribute-Based Encryption), we propose TAFC: a new time and attribute factors combined access control on time-sensitive data stored in the cloud. Extensive security and performance analysis shows that our proposed scheme is highly efficient and satisfies the security requirements for time-sensitive data storage in public cloud.

IEEE 2017: Attribute-Based Storage Supporting Secure Deduplication of Encrypted Data in Cloud

Abstract: Attribute-based encryption (ABE) has been widely used in cloud computing where a data provider outsources his/her encrypted data to a cloud service provider, and can share the data with users possessing specific credentials (or attributes). However, the standard ABE system does not support secure deduplication, which is crucial for eliminating duplicate copies of identical data in order to save storage space and network bandwidth. In this paper, we present an attribute-based storage system with secure deduplication in a hybrid cloud setting, where a private cloud is responsible for duplicate detection and a public cloud manages the storage. Compared with the prior data deduplication systems, our system has two advantages. Firstly, it can be used to confidentially share data with users by specifying access policies rather than sharing decryption keys. Secondly, it achieves the standard notion of semantic security for data confidentiality while existing systems only achieve it by defining a weaker security notion.

IEEE 2017: A Collision-Mitigation Cuckoo Hashing Scheme for Large-scale Storage Systems

Abstract: With the rapid growth of the amount of information, cloud computing servers need to process and analyze large amounts of high-dimensional and unstructured data in a timely and accurate manner. This usually requires many query operations. Due to their simplicity and ease of use, cuckoo hashing schemes have been widely used in real-world cloud-related applications. However, due to potential hash collisions, cuckoo hashing suffers from endless loops and high insertion latency, and even a high risk of reconstructing the entire hash table. In order to address these problems, we propose a cost-efficient cuckoo hashing scheme, called MinCounter. The idea behind MinCounter is to alleviate the occurrence of endless loops in data insertion by selecting unbusy kicking-out routes. MinCounter selects the “cold” (infrequently accessed), rather than random, buckets to handle hash collisions. We further improve the concurrency of the MinCounter scheme to pursue higher performance and adapt to concurrent applications. MinCounter has the salient features of offering efficient insertion and query services and delivering high performance of cloud servers, as well as enhancing the experience of cloud users. We have implemented MinCounter in a large-scale cloud test bed and examined the performance by using three real-world traces. Extensive experimental results demonstrate the efficacy and efficiency of MinCounter.
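The "cold bucket" idea in the abstract above can be sketched in a few lines. This is a simplified illustration, not the authors' implementation: the class name `MinCounterTable`, the use of three salted BLAKE2b hashes over a single table, and the `max_kicks` bound are all assumptions made for the example. Each bucket carries a counter of how often it has been kicked; on a collision the bucket with the smallest counter is chosen as the victim instead of a random one.

```python
import hashlib
from typing import List, Optional


class MinCounterTable:
    """Toy cuckoo table that evicts from the least-kicked candidate bucket."""

    def __init__(self, size: int, num_hashes: int = 3, max_kicks: int = 64):
        self.size = size
        self.num_hashes = num_hashes
        self.max_kicks = max_kicks
        self.slots: List[Optional[str]] = [None] * size
        self.counters = [0] * size  # kick-out count per bucket

    def _candidates(self, key: str) -> List[int]:
        # One salted hash per hash function (hypothetical choice of hash).
        out = []
        for i in range(self.num_hashes):
            digest = hashlib.blake2b(
                key.encode(), digest_size=8, salt=bytes([i])
            ).digest()
            out.append(int.from_bytes(digest, "big") % self.size)
        return out

    def contains(self, key: str) -> bool:
        return any(self.slots[p] == key for p in self._candidates(key))

    def insert(self, key: str) -> bool:
        """Insert key; return False if the kick budget is exhausted.

        On failure the item left in hand is dropped; a real system
        would rehash or grow the table instead.
        """
        if self.contains(key):
            return True
        for _ in range(self.max_kicks + 1):
            cands = self._candidates(key)
            for p in cands:
                if self.slots[p] is None:
                    self.slots[p] = key
                    return True
            # All candidates are full: kick the "coldest" bucket.
            victim = min(cands, key=lambda p: self.counters[p])
            self.counters[victim] += 1
            key, self.slots[victim] = self.slots[victim], key
        return False
```

Because a bucket's counter grows each time it is used as a victim, repeatedly kicked buckets become less attractive, which is one way to steer insertions away from busy kick-out paths.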

IEEE 2016: Reducing Fragmentation for In-line Deduplication Backup Storage via Exploiting Backup History and Cache Knowledge

Abstract: In backup systems, the chunks of each backup are physically scattered after deduplication, which causes a challenging fragmentation problem. We observe that the fragmentation comes into sparse and out-of-order containers. The sparse container decreases restore performance and garbage collection efficiency, while the out-of-order container decreases restore performance if the restore cache is small. In order to reduce the fragmentation, we propose History-Aware Rewriting algorithm (HAR) and Cache-Aware Filter (CAF). HAR exploits historical information in backup systems to accurately identify and reduce sparse containers, and CAF exploits restore cache knowledge to identify the out-of-order containers that hurt restore performance. CAF efficiently complements HAR in datasets where out-of-order containers are dominant. To reduce the metadata overhead of the garbage collection, we further propose a Container-Marker Algorithm (CMA) to identify valid containers instead of valid chunks. Our extensive experimental results from real-world datasets show HAR significantly improves the restore performance by 2.84-175.36 × at a cost of only rewriting 0.5-2.03 percent data.
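The notion of a sparse container can be made concrete with a small sketch. The abstract does not define the criterion, so the following rests on assumptions: a container is treated as sparse when the fraction of its chunk slots referenced by a backup falls below a hypothetical `threshold`, and the sparse set recorded for one backup is used to pick chunks to rewrite in the next. The function names and the fixed `container_size` are illustrative only.

```python
from collections import Counter
from typing import Iterable, List, Set, Tuple


def sparse_containers(chunk_refs: Iterable[int], container_size: int,
                      threshold: float = 0.5) -> Set[int]:
    """Return IDs of containers whose utilization is below the threshold.

    chunk_refs holds, for each chunk a backup references, the ID of the
    container storing it; utilization = referenced chunks / container_size.
    """
    used = Counter(chunk_refs)
    return {cid for cid, n in used.items() if n / container_size < threshold}


def chunks_to_rewrite(next_backup: List[Tuple[str, int]],
                      inherited_sparse: Set[int]) -> List[str]:
    """Pick chunks of the next backup that live in previously sparse containers."""
    return [chunk for chunk, cid in next_backup if cid in inherited_sparse]


# Example: container 1 is 3/4 used, container 2 only 1/4 used.
history = sparse_containers([1, 1, 1, 2], container_size=4)
rewrite = chunks_to_rewrite([("a", 1), ("b", 2)], history)
```

Using the previous backup's statistics is what makes such an approach history-aware: the decision for the current backup needs no extra pass over its own data.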

IEEE 2016: Secure Data Sharing in Cloud Computing Using Revocable-Storage Identity-Based Encryption

Abstract: Cloud computing provides a flexible and convenient way of sharing data, which brings various benefits for both society and individuals. However, users are naturally reluctant to outsource shared data directly to the cloud server, since the data often contain valuable information. Thus, it is necessary to place cryptographically enhanced access control on the shared data. Identity-based encryption is a promising cryptographic primitive for building a practical data sharing system. However, access control is not static. That is, when a user’s authorization expires, there should be a mechanism that can remove him/her from the system. Consequently, the revoked user can access neither the previously nor the subsequently shared data. To this end, we propose a notion called revocable-storage identity-based encryption (RS-IBE), which can provide forward/backward security of ciphertext by introducing the functionalities of user revocation and ciphertext update simultaneously. Furthermore, we present a concrete construction of RS-IBE, and prove its security in the defined security model. The performance comparisons indicate that the proposed RS-IBE scheme has advantages in terms of functionality and efficiency, and thus is feasible for a practical and cost-effective data-sharing system. Finally, we provide implementation results of the proposed scheme to demonstrate its practicability.

IEEE 2016: Key-Aggregate Searchable Encryption (KASE) for Group Data Sharing via Cloud Storage

Abstract: The capability of selectively sharing encrypted data with different users via public cloud storage may greatly ease security concerns over inadvertent data leaks in the cloud. A key challenge to designing such encryption schemes lies in the efficient management of encryption keys. The desired flexibility of sharing any group of selected documents with any group of users demands different encryption keys to be used for different documents. However, this also implies the necessity of securely distributing to users a large number of keys for both encryption and search, and those users will have to securely store the received keys, and submit an equally large number of keyword trapdoors to the cloud in order to perform search over the shared data. The implied need for secure communication, storage, and complexity clearly renders the approach impractical. In this paper, we address this practical problem, which is largely neglected in the literature, by proposing the novel concept of key-aggregate searchable encryption and instantiating the concept through a concrete KASE scheme, in which a data owner only needs to distribute a single key to a user for sharing a large number of documents, and the user only needs to submit a single trapdoor to the cloud for querying the shared documents. The security analysis and performance evaluation both confirm that our proposed schemes are provably secure and practically efficient.

IEEE 2016: Public Integrity Auditing for Shared Dynamic Cloud Data with Group User Revocation

Abstract: The advent of cloud computing has made storage outsourcing a rising trend, which in turn has made secure remote data auditing a hot topic in the research literature. Recently, some research has considered the problem of secure and efficient public data integrity auditing for shared dynamic data. However, these schemes are still not secure against collusion between the cloud storage server and revoked group users during user revocation in a practical cloud storage system. In this paper, we identify the collusion attack in the existing scheme and provide an efficient public integrity auditing scheme with secure group user revocation based on vector commitment and verifier-local revocation group signature. We design a concrete scheme based on our scheme definition. Our scheme supports public checking and efficient user revocation, as well as some nice properties such as confidentiality, efficiency, countability and traceability of secure group user revocation. Finally, the security and experimental analysis show that, compared with relevant schemes, our scheme is also secure and efficient.

IEEE 2016: Secure Auditing and Deduplicating Data in Cloud

Abstract: As cloud computing technology has developed during the last decade, outsourcing data to cloud services for storage has become an attractive trend, which spares users the effort of heavy data maintenance and management. Nevertheless, since outsourced cloud storage is not fully trustworthy, it raises security concerns on how to realize data deduplication in the cloud while achieving integrity auditing. In this work, we study the problem of integrity auditing and secure deduplication on cloud data. Specifically, aiming at achieving both data integrity and deduplication in the cloud, we propose two secure systems, namely SecCloud and SecCloud+. SecCloud introduces an auditing entity that maintains a MapReduce cloud, which helps clients generate data tags before uploading as well as audit the integrity of data stored in the cloud. Compared with previous work, the computation by the user in SecCloud is greatly reduced during the file uploading and auditing phases. SecCloud+ is motivated by the fact that customers always want to encrypt their data before uploading, and it enables integrity auditing and secure deduplication on encrypted data.

IEEE 2015 : CHARM - A Cost-efficient Multi-cloud Data Hosting Scheme with High Availability

IEEE 2015 TRANSACTIONS ON COMPUTERS

IEEE 2015 : Innovative Schemes for Resource Allocation in the Cloud for Media Streaming Applications

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : Media streaming applications have recently attracted a large number of users on the Internet. With the advent of these bandwidth-intensive applications, it is economically inefficient to provide streaming distribution with guaranteed QoS relying only on central resources at a media content provider. Cloud computing offers an elastic infrastructure that media content providers (e.g., Video on Demand (VoD) providers) can use to obtain streaming resources that match the demand. Media content providers are charged for the amount of resources allocated (reserved) in the cloud. Most of the existing cloud providers employ a pricing model for the reserved resources that is based on non-linear time-discount tariffs (e.g., Amazon CloudFront and Amazon EC2). Such a pricing scheme offers discount rates depending non-linearly on the period of time during which the resources are reserved in the cloud. In this case, an open problem is to decide on both the right amount of resources reserved in the cloud and their reservation time such that the financial cost to the media content provider is minimized. We propose a simple, easy-to-implement algorithm for resource reservation that maximally exploits discounted rates offered in the tariffs, while ensuring that sufficient resources are reserved in the cloud. Based on the prediction of demand for streaming capacity, our algorithm is carefully designed to reduce the risk of making wrong resource allocation decisions. The results of our numerical evaluations and simulations show that the proposed algorithm significantly reduces the monetary cost of resource allocations in the cloud as compared to other conventional schemes.

Abstract : Cloud Computing moves the application software and databases to the centralized large data centers, where the management of the data and services may not be fully trustworthy. In this work, we study the problem of ensuring the integrity of data storage in Cloud Computing. To reduce the computational cost at user side during the integrity verification of their data, the notion of public verifiability has been proposed. However, the challenge is that the computational burden is too huge for the users with resource-constrained devices to compute the public authentication tags of file blocks. To tackle the challenge, we propose OPoR, a new cloud storage scheme involving a cloud storage server and a cloud audit server, where the latter is assumed to be semi-honest. In particular, we consider the task of allowing the cloud audit server, on behalf of the cloud users, to pre-process the data before uploading to the cloud storage server and later verifying the data integrity. OPoR outsources the heavy computation of the tag generation to the cloud audit server and eliminates the involvement of user in the auditing and in the preprocessing phases. Furthermore, we strengthen the Proof of Retrievability (PoR) model to support dynamic data operations, as well as ensure security against reset attacks launched by the cloud storage server in the upload phase.

IEEE 2015 : Control Cloud Data Access Privilege and Anonymity With Fully Anonymous Attribute-Based Encryption

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : Cloud computing is a revolutionary computing paradigm, which enables flexible, on-demand, and low-cost usage of computing resources, but the data is outsourced to some cloud servers, and various privacy concerns emerge from it. Various schemes based on the attribute-based encryption have been proposed to secure the cloud storage. However, most work focuses on the data contents privacy and the access control, while less attention is paid to the privilege control and the identity privacy. In this paper, we present a semianonymous privilege control scheme AnonyControl to address not only the data privacy, but also the user identity privacy in existing access control schemes. AnonyControl decentralizes the central authority to limit the identity leakage and thus achieves semianonymity. Besides, it also generalizes the file access control to the privilege control, by which privileges of all operations on the cloud data can be managed in a fine-grained manner. Subsequently, we present the AnonyControl-F, which fully prevents the identity leakage and achieves the full anonymity. Our security analysis shows that both AnonyControl and AnonyControl-F are secure under the decisional bilinear Diffie–Hellman assumption, and our performance evaluation exhibits the feasibility of our schemes.

IEEE 2015 : OPoR - Enabling Proof of Retrievability in Cloud Computing with Resource-Constrained Devices

IEEE 2015 TRANSACTIONS ON COMPUTERS

IEEE 2015 : Reducing Fragmentation for In-line Deduplication Backup Storage via Exploiting Backup History And Cache Knowledge

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : In backup systems, the chunks of each backup are physically scattered after deduplication, which causes a challenging fragmentation problem. We observe that the fragmentation appears as sparse and out-of-order containers. The sparse container decreases restore performance and garbage collection efficiency, while the out-of-order container decreases restore performance if the restore cache is small. In order to reduce the fragmentation, we propose the History-Aware Rewriting algorithm (HAR) and the Cache-Aware Filter (CAF). HAR exploits historical information in backup systems to accurately identify and reduce sparse containers, and CAF exploits restore cache knowledge to identify the out-of-order containers that hurt restore performance. CAF efficiently complements HAR in datasets where out-of-order containers are dominant. To reduce the metadata overhead of the garbage collection, we further propose a Container-Marker Algorithm (CMA) to identify valid containers instead of valid chunks. Our extensive experimental results from real-world datasets show HAR significantly improves the restore performance by 2.84-175.36x at a cost of only rewriting 0.5-2.03% data.

IEEE 2015 : Secure Distributed Deduplication Systems with Improved Reliability

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : Data deduplication is a technique for eliminating duplicate copies of data, and has been widely used in cloud storage to reduce storage space and upload bandwidth. However, there is only one copy for each file stored in cloud even if such a file is owned by a huge number of users. As a result, deduplication system improves storage utilization while reducing reliability. Furthermore, the challenge of privacy for sensitive data also arises when they are outsourced by users to cloud. Aiming to address the above security challenges, this paper makes the first attempt to formalize the notion of distributed reliable deduplication system. We propose new distributed deduplication systems with higher reliability in which the data chunks are distributed across multiple cloud servers. The security requirements of data confidentiality and tag consistency are also achieved by introducing a deterministic secret sharing scheme in distributed storage systems, instead of using convergent encryption as in previous deduplication systems. Security analysis demonstrates that our deduplication systems are secure in terms of the definitions specified in the proposed security model. As a proof of concept, we implement the proposed systems and demonstrate that the incurred overhead is very limited in realistic environments.

IEEE 2015 : Enabling Efficient Multi-Keyword Ranked Search Over Encrypted Mobile Cloud Data Through Blind Storage

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : In mobile cloud computing, a fundamental application is to outsource the mobile data to external cloud servers for scalable data storage. The outsourced data, however, need to be encrypted due to the privacy and confidentiality concerns of their owner. This results in distinct difficulties for accurate search over the encrypted mobile cloud data. To tackle this issue, in this paper, we develop the searchable encryption for multi-keyword ranked search over the storage data. Specifically, by considering the large number of outsourced documents (data) in the cloud, we utilize the relevance score and k-nearest neighbor techniques to develop an efficient multi-keyword search scheme that can return the ranked search results based on the accuracy. Within this framework, we leverage an efficient index to further improve the search efficiency, and adopt the blind storage system to conceal the access pattern of the search user. Security analysis demonstrates that our scheme can achieve confidentiality of documents and index, trapdoor privacy, trapdoor unlinkability, and concealing the access pattern of the search user. Finally, using extensive simulations, we show that our proposal can achieve much improved efficiency in terms of search functionality and search time compared with the existing proposals.

Governance Model for Cloud Computing in Building Information Management

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : The AEC (Architecture Engineering and Construction) sector is a highly fragmented, data intensive, project based industry, involving a number of very different professions and organisations. The industry’s strong data sharing and processing requirements mean that the management of building data is complex and challenging. We present a data sharing capability utilising Cloud Computing, with two key contributions: 1) a governance model for building data, based on extensive research and industry consultation. This governance model describes how individual data artefacts within a building information model relate to each other and how access to this data is controlled; 2) a prototype implementation of this governance model, utilising the CometCloud autonomic cloud computing engine, using the Master/Worker paradigm. This prototype is able to successfully store and manage building data, provide security based on a defined policy language and demonstrate scale-out in case of increasing demand or node failure. Our prototype is evaluated both qualitatively and quantitatively. To enable this evaluation we have integrated our prototype with the 3D modelling software Google Sketchup. We also evaluate the prototype’s performance when scaling to utilise additional nodes in the Cloud and to determine its performance in case of node failures.

Secure Sensitive Data Sharing on a Big Data Platform

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract: Users store vast amounts of sensitive data on a big data platform. Sharing sensitive data will help enterprises reduce the cost of providing users with personalized services and provide value-added data services. However, secure data sharing is problematic. This paper proposes a framework for secure sensitive data sharing on a big data platform, including secure data delivery, storage, usage, and destruction on a semi-trusted big data sharing platform. We present a proxy re-encryption algorithm based on heterogeneous ciphertext transformation and a user process protection method based on a virtual machine monitor, which provides support for the realization of system functions. The framework protects the security of users’ sensitive data effectively and shares these data safely. At the same time, data owners retain complete control of their own data in a sound environment for modern Internet information security.

Just-in-Time Code Offloading for Wearable Computing

IEEE 2015 TRANSACTIONS ON COMPUTERS

ABSTRACT : Wearable computing is becoming an emerging computing paradigm for various recently developed wearable devices, such as Google Glass and the Samsung Galaxy Smartwatch, which have significantly changed our daily life with new functions. To magnify the applications on wearable devices with limited computational capability, storage, and battery capacity, in this paper, we propose a novel three-layer architecture consisting of wearable devices, mobile devices, and a remote cloud for code offloading. In particular, we offload a portion of computation tasks from wearable devices to local mobile devices or the remote cloud such that even applications with a heavy computation load can still be upheld on wearable devices. Furthermore, considering the special characteristics and the requirements of wearable devices, we investigate a code offloading strategy with a novel just-in-time objective, i.e., maximizing the number of tasks that should be executed on wearable devices with guaranteed delay requirements. Because this problem is NP-hard, as we prove, we propose a fast heuristic algorithm based on the genetic algorithm to solve it. Finally, extensive simulations are conducted to show that our proposed algorithm significantly outperforms three other offloading strategies.

Panda: Public Auditing for Shared Data with Efficient User Revocation in the Cloud

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract—With data storage and sharing services in the cloud, users can easily modify and share data as a group. To ensure shared data integrity can be verified publicly, users in the group need to compute signatures on all the blocks in shared data. Different blocks in shared data are generally signed by different users due to data modifications performed by different users. For security reasons, once a user is revoked from the group, the blocks which were previously signed by this revoked user must be re-signed by an existing user. The straightforward method, which allows an existing user to download the corresponding part of shared data and re-sign it during user revocation, is inefficient due to the large size of shared data in the cloud. In this paper, we propose a novel public auditing mechanism for the integrity of shared data with efficient user revocation in mind. By utilizing the idea of proxy re-signatures, we allow the cloud to re-sign blocks on behalf of existing users during user revocation, so that existing users do not need to download and re-sign blocks by themselves. In addition, a public verifier is always able to audit the integrity of shared data without retrieving the entire data from the cloud, even if some part of shared data has been re-signed by the cloud. Moreover, our mechanism is able to support batch auditing by verifying multiple auditing tasks simultaneously. Experimental results show that our mechanism can significantly improve the efficiency of user revocation.

Toward Offering More Useful Data Reliably to Mobile Cloud From Wireless Sensor Network

IEEE 2015 TRANSACTIONS ON COMPUTERS

ABSTRACT : The integration of ubiquitous wireless sensor network (WSN) and powerful mobile cloud computing (MCC) is a research topic that is attracting growing interest in both academia and industry. In this new paradigm, WSN provides data to the cloud and mobile users request data from the cloud. To support applications involving WSN-MCC integration, which need to reliably offer data that are more useful to the mobile users from WSN to cloud, this paper first identifies the critical issues that affect the usefulness of sensory data and the reliability of WSN, then proposes a novel WSN-MCC integration scheme named TPSS, which consists of two main parts: 1) time and priority-based selective data transmission (TPSDT) for WSN gateway to selectively transmit sensory data that are more useful to the cloud, considering the time and priority features of the data requested by the mobile user and 2) priority-based sleep scheduling (PSS) algorithm for WSN to save energy consumption so that it can gather and transmit data in a more reliable way. Analytical and experimental results demonstrate the effectiveness of TPSS in improving usefulness of sensory data and reliability of WSN for WSN-MCC integration.

On Traffic-Aware Partition and Aggregation in MapReduce for Big Data Applications

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : The MapReduce programming model simplifies large-scale data processing on commodity cluster by exploiting parallel map tasks and reduce tasks. Although many efforts have been made to improve the performance of MapReduce jobs, they ignore the network traffic generated in the shuffle phase, which plays a critical role in performance enhancement. Traditionally, a hash function is used to partition intermediate data among reduce tasks, which, however, is not traffic-efficient because network topology and data size associated with each key are not taken into consideration. In this paper, we study to reduce network traffic cost for a MapReduce job by designing a novel intermediate data partition scheme. Furthermore, we jointly consider the aggregator placement problem, where each aggregator can reduce merged traffic from multiple map tasks. A decomposition-based distributed algorithm is proposed to deal with the large-scale optimization problem for big data application and an online algorithm is also designed to adjust data partition and aggregation in a dynamic manner. Finally, extensive simulation results demonstrate that our proposals can significantly reduce network traffic cost under both offline and online cases.
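
As a rough illustration of the baseline the abstract criticizes, the sketch below assigns each intermediate key to a reduce task by hashing alone and then tallies how many bytes each reducer receives. The function names, the use of CRC32 as the hash, and the sample key sizes are assumptions made for this example; they are not taken from the paper.

```python
import zlib


def hash_partition(key: str, num_reducers: int) -> int:
    """Pick a reducer from the key's hash alone (CRC32 is an assumed stand-in)."""
    return zlib.crc32(key.encode("utf-8")) % num_reducers


def reducer_loads(key_sizes: dict, num_reducers: int) -> list:
    """Sum the bytes sent to each reducer under pure hash partitioning."""
    loads = [0] * num_reducers
    for key, size in key_sizes.items():
        loads[hash_partition(key, num_reducers)] += size
    return loads


# Hypothetical intermediate data: bytes emitted per key by the map tasks.
key_sizes = {"alpha": 900, "beta": 50, "gamma": 30, "delta": 20}
loads = reducer_loads(key_sizes, num_reducers=2)
print(loads)
```

Because the partitioner never looks at the per-key sizes or at where the reducers sit in the network, a single heavy key can dominate one reducer's inbound traffic, which is the inefficiency the abstract points to.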

IEEE 2015 : A Tree Regression Based Approach for VM Power Metering

IEEE 2015 TRANSACTIONS ON PARALLEL AND DISTRIBUTED SYSTEMS

Abstract : Cloud computing is developing so fast that more and more data centers have been built every year. This naturally leads to high power consumption. VM (Virtual Machine) consolidation is the most popular solution based on resource utilization. In fact, much more power can be saved if we know the power consumption of each VM. Therefore, it is significant to measure the power consumption of each VM for green cloud data centers. Since there is no device that can directly measure the power consumption of each VM, modeling methods have been proposed. However, current models are not accurate enough when multi-VMs are competing for resources on the same server. One of the main reasons is that the resource features for modeling are correlated with each other, such as CPU and cache. In this paper, we propose a tree regression based method to accurately measure the power consumption of VMs on the same host. The merits of this method are that the tree structure will split the dataset into partitions, and each is an easy-modeling subset. Experiments show that the average accuracy of our method is about 98% for different types of applications running in VMs.

IEEE 2015 : Cost-Minimizing Dynamic Migration of Content Distribution Services into Hybrid Clouds

IEEE 2015 TRANSACTIONS ON PARALLEL AND DISTRIBUTED SYSTEMS

Abstract : The recent advent of cloud computing technologies has enabled agile and scalable resource access for a variety of applications. Content distribution services are a major category of popular Internet applications. A growing number of content providers are contemplating a switch to cloud-based services, for better scalability and lower cost. Two key tasks are involved for such a move: to migrate their contents to cloud storage, and to distribute their web service load to cloud-based web services. The main challenge is to make the best use of the cloud as well as their existing on-premise server infrastructure, to serve volatile content requests with service response time guarantee at all times, while incurring the minimum operational cost. Employing Lyapunov optimization techniques, we present an optimization framework for dynamic, cost-minimizing migration of content distribution services into a hybrid cloud infrastructure that spans geographically distributed data centers. A dynamic control algorithm is designed, which optimally places contents and dispatches requests in different data centers to minimize overall operational cost over time, subject to service response time constraints. Rigorous analysis shows that the algorithm nicely bounds the response times within the preset QoS target in cases of arbitrary request arrival patterns, and guarantees that the overall cost is within a small constant gap from the optimum achieved by a T-slot look ahead mechanism with known information into the future.

IEEE 2015 : A Combinatorial Auction-Based Collaborative Cloud Services Platform

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract: In this paper, we introduce a combinatorial auction-based market model to enable Cloud Service Providers (CSPs) to satisfy complex user requirements collaboratively, where the CSPs are connected in a social network and communication costs among them cannot be ignored. However, in many situations CSPs may lie about their private information in order to maximize their earnings. Therefore, we combine the combinatorial auction with the VCG-auction mechanism to ensure that CSPs do not lie in the auction. Based on the above market model, we construct a collaborative cloud platform, which is divided into three layers: the user-layer receives requests from end-users, the auction-layer matches the requests with the cloud services provided by the Cloud Service Provider (CSP), and the CSP-layer forms a coalition to improve serving ability to satisfy complex requirements of users. In fact, the aim of the coalition formation is to find suitable partners for a particular CSP, and we propose two heuristic algorithms for the coalition formation. The Breadth Traversal Algorithm (BTA) and Revised Ant Colony Algorithm (RACA) are proposed to form a coalition when bidding for a single cloud service in the auction. The experimental results show that RACA outperforms the BTA in bid price and our methods work well compared to the existing auction-based method in terms of economic efficiency. Other experiments were conducted to evaluate the impact of the communication cost on coalition formation and to assess the impact of iteration times for the optimal bidding price.
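
To show why a VCG-style payment rule discourages misreporting, here is a minimal sketch of its simplest special case: a single-service procurement auction in which the lowest ask wins and is paid the second-lowest ask. This is the textbook rule, not the paper's combinatorial mechanism; the provider names and prices are hypothetical.

```python
def reverse_vickrey(asks: dict) -> tuple:
    """Return (winner, payment): lowest ask wins and is paid the second-lowest ask."""
    if len(asks) < 2:
        raise ValueError("need at least two bidders")
    ranked = sorted(asks.items(), key=lambda item: item[1])
    winner = ranked[0][0]
    payment = ranked[1][1]
    return winner, payment


def profit(true_cost: float, report: float, other_asks: dict) -> float:
    """Profit of a provider named 'me' that reports `report` but really incurs `true_cost`."""
    asks = dict(other_asks)
    asks["me"] = report
    winner, payment = reverse_vickrey(asks)
    return payment - true_cost if winner == "me" else 0.0


# Hypothetical rivals asking 6 and 9; our true cost is 4.
rivals = {"csp_b": 6.0, "csp_c": 9.0}
for report in (4.0, 5.0, 7.0):
    print(report, profit(4.0, report, rivals))
```

In this toy setting, overstating the cost either leaves the profit unchanged or loses the auction, so truthful reporting is never worse than the alternatives tried here.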

IEEE 2015 : Secure Optimization Computation Outsourcing in Cloud Computing: A Case Study of Linear Programming

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : Cloud computing enables an economically promising paradigm of computation outsourcing. However, how to protect customers' confidential data processed and generated during the computation is becoming the major security concern. Focusing on engineering computing and optimization tasks, this paper investigates secure outsourcing of widely applicable linear programming (LP) computations. Our mechanism design explicitly decomposes LP computation outsourcing into public LP solvers running on the cloud and private LP parameters owned by the customer. The resulting flexibility allows us to explore an appropriate security/efficiency trade-off via a higher-level abstraction of LP computation than the general circuit representation. Specifically, by formulating the private LP problem as a set of matrices/vectors, we develop efficient privacy-preserving problem transformation techniques, which allow customers to transform the original LP into some random one while protecting sensitive input/output information. To validate the computation result, we further explore the fundamental duality theorem of LP and derive the necessary and sufficient conditions that correct results must satisfy. Such a result verification mechanism is very efficient and incurs close-to-zero additional cost on both cloud server and customers. Extensive security analysis and experiment results show the immediate practicability of our mechanism design.
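
The verification idea can be illustrated with the textbook strong-duality check for a standard-form LP: minimize c^T x subject to Ax = b, x >= 0, whose dual is maximize b^T y subject to A^T y <= c. A candidate pair (x, y) is optimal exactly when both are feasible and the two objective values coincide. The sketch below is that generic check only; it omits the paper's privacy-preserving transformation, and the function name, tolerance, and sample problem are assumptions.

```python
def verify_lp_solution(A, b, c, x, y, tol=1e-9):
    """Check primal feasibility, dual feasibility, and a zero duality gap."""
    m, n = len(A), len(c)
    # Primal feasibility: Ax = b and x >= 0.
    for i in range(m):
        if abs(sum(A[i][j] * x[j] for j in range(n)) - b[i]) > tol:
            return False
    if any(xj < -tol for xj in x):
        return False
    # Dual feasibility: A^T y <= c.
    for j in range(n):
        if sum(A[i][j] * y[i] for i in range(m)) > c[j] + tol:
            return False
    # Strong duality: the two objective values must match.
    primal = sum(c[j] * x[j] for j in range(n))
    dual = sum(b[i] * y[i] for i in range(m))
    return abs(primal - dual) <= tol


# Hypothetical problem: minimize x1 + 2*x2 subject to x1 + x2 = 1, x >= 0.
A, b, c = [[1.0, 1.0]], [1.0], [1.0, 2.0]
print(verify_lp_solution(A, b, c, x=[1.0, 0.0], y=[1.0]))  # True
print(verify_lp_solution(A, b, c, x=[0.0, 1.0], y=[1.0]))  # False
```

The check needs only a few matrix-vector products, far less work than solving the LP, which is in line with the abstract's claim that verification adds little cost.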

IEEE 2015 : Provable Multicopy Dynamic Data Possession in Cloud Computing System

IEEE 2015 TRANSACTIONS ON INFORMATION FORENSICS AND SECURITY

Abstract : More and more organizations are opting for outsourcing data to remote cloud service providers (CSPs). Customers can rent the CSP's storage infrastructure to store and retrieve an almost unlimited amount of data by paying fees metered in gigabyte/month. For an increased level of scalability, availability, and durability, some customers may want their data to be replicated on multiple servers across multiple data centers. The more copies the CSP is asked to store, the more fees the customers are charged. Therefore, customers need to have a strong guarantee that the CSP is storing all data copies that are agreed upon in the service contract, and all these copies are consistent with the most recent modifications issued by the customers. In this paper, we propose a map-based provable multicopy dynamic data possession (MB-PMDDP) scheme that has the following features: 1) it provides evidence to the customers that the CSP is not cheating by storing fewer copies; 2) it supports outsourcing of dynamic data, i.e., it supports block-level operations, such as block modification, insertion, deletion, and append; and 3) it allows authorized users to seamlessly access the file copies stored by the CSP. We give a comparative analysis of the proposed MB-PMDDP scheme with a reference model obtained by extending existing provable possession of dynamic single-copy schemes. The theoretical analysis is validated through experimental results on a commercial cloud platform. In addition, we show the security against colluding servers, and discuss how to identify corrupted copies by slightly modifying the proposed scheme.

IEEE 2015 TRANSACTIONS ON COMPUTERS

Abstract : The advent of cloud computing has made storage outsourcing a rising trend, which in turn has made secure remote data auditing a hot topic in the research literature. Recently, some research has considered the problem of secure and efficient public data integrity auditing for shared dynamic data. However, these schemes are still not secure against the collusion of the cloud storage server and revoked group users during user revocation in a practical cloud storage system. In this paper, we identify the collusion attack in the existing scheme and provide an efficient public integrity auditing scheme with secure group user revocation based on vector commitment and verifier-local revocation group signature. We design a concrete scheme based on our scheme definition. Our scheme supports public checking and efficient user revocation, as well as some nice properties, such as confidentiality, efficiency, countability, and traceability of secure group user revocation. Finally, the security and experimental analysis show that, compared with its relevant schemes, our scheme is also secure and efficient.

IEEE 2015 : Energy-aware Load Balancing and Application Scaling for the Cloud Ecosystem

IEEE 2015 TRANSACTIONS ON CLOUD COMPUTING

Abstract : In this paper we introduce an energy-aware operation model used for load balancing and application scaling on a cloud. The basic philosophy of our approach is defining an energy-optimal operation regime and attempting to maximize the number of servers operating in this regime. Idle and lightly-loaded servers are switched to one of the sleep states to save energy. The load balancing and scaling algorithms also exploit some of the most desirable features of server consolidation mechanisms discussed in the literature.

IEEE 2015 : Shared Authority Based Privacy-preserving Authentication Protocol in Cloud Computing

IEEE 2015 TRANSACTIONS ON PARALLEL AND DISTRIBUTED SYSTEMS

Abstract: Cloud computing is emerging as a prevalent data interactive paradigm to realize users’ data remotely stored in an online cloud server. Cloud services provide great conveniences for the users to enjoy the on-demand cloud applications without considering the local infrastructure limitations. During data access, different users may be in a collaborative relationship, and thus data sharing becomes significant to achieve productive benefits. The existing security solutions mainly focus on authentication to ensure that a user’s private data cannot be accessed without authorization, but neglect a subtle privacy issue that arises when a user challenges the cloud server to request data sharing from other users. The challenged access request itself may reveal the user’s privacy no matter whether or not it can obtain the data access permissions. In this paper, we propose a shared authority based privacy-preserving authentication protocol (SAPA) to address the above privacy issue for cloud storage. In the SAPA, 1) shared access authority is achieved by an anonymous access request matching mechanism with security and privacy considerations (e.g., authentication, data anonymity, user privacy, and forward security); 2) attribute based access control is adopted to realize that the user can only access its own data fields; 3) proxy re-encryption is applied by the cloud server to provide data sharing among the multiple users. Meanwhile, a universal composability (UC) model is established to prove that the SAPA theoretically has the design correctness. This indicates that the proposed protocol, which realizes privacy-preserving data access authority sharing, is attractive for multi-user collaborative cloud applications.

IEEE 2015 : I-sieve - An Inline High Performance Deduplication System Used in Cloud Storage

IEEE 2015 TRANSACTIONS ON SERVICE COMPUTING

Abstract : Data deduplication is an emerging and widely employed method for current storage systems. As this technology is gradually applied in inline scenarios such as with virtual machines and cloud storage systems, this study proposes a novel deduplication architecture called I-sieve. The goal of I-sieve is to realize a high performance data sieve system based on iSCSI in the cloud storage system. We also design the corresponding index and mapping tables and present a multi-level cache using a solid state drive to reduce RAM consumption and to optimize lookup performance. A prototype of I-sieve is implemented based on the open source iSCSI target, and many experiments have been conducted driven by virtual machine images and testing tools. The evaluation results show excellent deduplication and foreground performance. More importantly, I-sieve can co-exist with the existing deduplication systems as long as they support the iSCSI protocol.

IEEE 2015 : Secure and Practical Outsourcing of Linear Programming in Cloud Computing

IEEE 2015 TRANSACTIONS ON SERVICE COMPUTING

Abstract : Cloud Computing has great potential of providing robust computational power to the society at reduced cost. It enables customers with limited computational resources to outsource their large computation workloads to the cloud, and economically enjoy the massive computational power, bandwidth, storage, and even appropriate software that can be shared in a pay-per-use manner. Despite the tremendous benefits, security is the primary obstacle that prevents the wide adoption of this promising computing model, especially for customers when their confidential data are consumed and produced during the computation. Treating the cloud as an intrinsically insecure computing platform from the viewpoint of the cloud customers, we must design mechanisms that not only protect sensitive information by enabling computations with encrypted data, but also protect customers from malicious behaviors by enabling the validation of the computation result. Such a mechanism of general secure computation outsourcing was recently shown to be feasible in theory, but to design mechanisms that are practically efficient remains a very challenging problem. Focusing on engineering computing and optimization tasks, this paper investigates secure outsourcing of widely applicable linear programming (LP) computations. In order to achieve practical efficiency, our mechanism design explicitly decomposes the LP computation outsourcing into public LP solvers running on the cloud and private LP parameters owned by the customer. The resulting flexibility allows us to explore an appropriate security/efficiency trade-off via a higher-level abstraction of LP computations than the general circuit representation. In particular, by formulating private data owned by the customer for the LP problem as a set of matrices and vectors, we are able to develop a set of efficient privacy-preserving problem transformation techniques, which allow customers to transform the original LP problem into some arbitrary one while protecting sensitive input/output information. To validate the computation result, we further explore the fundamental duality theorem of LP computation and derive the necessary and sufficient conditions that a correct result must satisfy. Such a result verification mechanism is extremely efficient and incurs close-to-zero additional cost on both cloud server and customers. Extensive security analysis and experiment results show the immediate practicability of our mechanism design.

IEEE 2015 : A Profit Maximization Scheme with Guaranteed Quality of Service in Cloud Computing

IEEE 2015 TRANSACTIONS ON SERVICE COMPUTING

Abstract : As an effective and efficient way to provide computing resources and services to customers on demand, cloud computing has become more and more popular. From cloud service providers’ perspective, profit is one of the most important considerations, and it is mainly determined by the configuration of a cloud service platform under given market demand. However, a single long-term renting scheme is usually adopted to configure a cloud platform, which cannot guarantee the service quality but leads to serious resource waste. In this paper, a double resource renting scheme is designed firstly, in which short-term renting and long-term renting are combined to address the existing issues. This double renting scheme can effectively guarantee the quality of service of all requests and reduce the resource waste greatly. Secondly, a service system is considered as an M/M/m+D queuing model and the performance indicators that affect the profit of our double renting scheme are analyzed, e.g., the average charge, the ratio of requests that need temporary servers, and so forth. Thirdly, a profit maximization problem is formulated for the double renting scheme and the optimized configuration of a cloud platform is obtained by solving the profit maximization problem. Finally, a series of calculations are conducted to compare the profit of our proposed scheme with that of the single renting scheme. The results show that our scheme can not only guarantee the service quality of all requests, but also obtain more profit than the latter.
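
For intuition about the queueing quantities mentioned above, the sketch below evaluates the classical Erlang C formula for a plain M/M/m queue: the probability that an arriving request finds all m servers busy, and the probability that its wait exceeds a deadline D under first-come-first-served service. This is the standard M/M/m result rather than the paper's M/M/m+D analysis, and the parameter values are hypothetical.

```python
import math


def erlang_c(m: int, lam: float, mu: float) -> float:
    """Probability that an arrival must wait in a stable M/M/m queue."""
    a = lam / mu  # offered load in Erlangs
    if a >= m:
        raise ValueError("unstable queue: need lam < m * mu")
    busy_term = (a ** m / math.factorial(m)) * (m / (m - a))
    idle_sum = sum(a ** k / math.factorial(k) for k in range(m))
    return busy_term / (idle_sum + busy_term)


def prob_wait_exceeds(m: int, lam: float, mu: float, deadline: float) -> float:
    """P(wait > deadline) for FCFS M/M/m: Erlang C times an exponential tail."""
    return erlang_c(m, lam, mu) * math.exp(-(m * mu - lam) * deadline)


# Hypothetical platform: 2 servers, arrival rate 1.0, service rate 1.0 per server.
print(erlang_c(2, lam=1.0, mu=1.0))
print(prob_wait_exceeds(2, lam=1.0, mu=1.0, deadline=1.0))
```

In a plain M/M/m system, the second quantity is a rough proxy for the share of requests that would miss a waiting-time deadline D; the paper's own model treats such requests differently, so the numbers are illustrative only.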

IEEE 2015 : Key-Aggregate Searchable Encryption (KASE) for Group Data Sharing via Cloud Storage

IEEE 2015 TRANSACTIONS ON SERVICE COMPUTING

Abstract : The capability of selectively sharing encrypted data with

different users via public cloud storage may greatly ease security concerns

over inadvertent data leaks in the cloud. A key challenge to designing such

encryption schemes lies in the efficient management of encryption keys. The

desired flexibility of sharing any group of selected documents with any group

of users demands different encryption keys to be used for different documents.

However, this also implies the necessity of securely distributing to users a

large number of keys for both encryption and search, and those users will have

to securely store the received keys, and submit an equally large number of

keyword trapdoors to the cloud in order to perform search over the shared data.

The implied need for secure communication, storage, and complexity clearly

renders the approach impractical. In this paper, we address this practical

problem, which is largely neglected in the literature, by proposing the novel

concept of key aggregate searchable encryption (KASE) and instantiating

the concept through a concrete KASE scheme, in which a data owner only needs to

distribute a single key to a user for sharing a large number of documents, and

the user only needs to submit a single trapdoor to the cloud for querying the

shared documents. The security analysis and performance evaluation both confirm that our proposed schemes are provably secure and practically efficient.

IEEE 2015 : Secure Auditing and Deduplicating Data in Cloud

IEEE 2015 TRANSACTIONS ON SERVICE COMPUTING


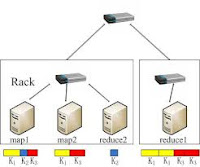

Abstract : As cloud computing technology has developed over the last decade,

outsourcing data to cloud services for storage has become an attractive trend,

which spares users the effort of heavy data maintenance and management. Nevertheless,

since the outsourced cloud storage is not fully trustworthy, it raises security

concerns on how to realize data deduplication in cloud while achieving integrity

auditing. In this work, we study the problem of integrity auditing and secure

deduplication on cloud data. Specifically, aiming at achieving both data

integrity and deduplication in cloud, we propose two secure systems, namely

SecCloud and SecCloud+. SecCloud introduces an auditing entity with a

maintenance of a MapReduce cloud, which helps clients generate data tags before

uploading as well as audit the integrity of data having been stored in cloud.

Compared with previous work, the computation by user in SecCloud is greatly

reduced during the file uploading and auditing phases. SecCloud+ is designed

motivated by the fact that customers always want to encrypt their data before

uploading, and enables integrity auditing and secure deduplication on encrypted

data.

IEEE 2014 : Fog Computing: Mitigating Insider Data Theft Attacks in the Cloud

IEEE 2014 TRANSACTIONS ON SERVICE COMPUTING

Abstract :Cloud computing promises to significantly change the way we use computers

and access and store our personal and business information. With these new

computing and communications paradigms arise new data security challenges. Existing

data protection mechanisms such as encryption have failed in preventing data

theft attacks, especially those perpetrated by an insider to the cloud

provider. We propose a different approach for securing data in the cloud using

offensive decoy technology. We monitor data access in the cloud and detect

abnormal data access patterns. When unauthorized access is suspected and then

verified using challenge questions, we launch a disinformation attack by

returning large amounts of decoy information to the attacker. This protects

against the misuse of the user’s real data. Experiments conducted in a local

file setting provide evidence that this approach may provide unprecedented

levels of user data security in a Cloud environment.

IEEE 2014 : SeDas: A Self-Destructing Data System Based on Active Storage Framework

IEEE 2014 TRANSACTIONS ON SERVICE COMPUTING

Abstract :Personal data stored in the Cloud may contain

account numbers, passwords, notes, and other important information that could

be used and misused by a miscreant, a competitor, or a court of law. These data

are cached, copied, and archived by Cloud Service Providers (CSPs), often

without users’ authorization and control. Self-destructing data mainly aims at

protecting the user data’s privacy. All the data and their copies become

destructed or unreadable after a user-specified time, without any user

intervention. In addition, the decryption key is destructed after the

user-specified time. In this paper, we present SeDas, a system that meets this

challenge through a novel integration of cryptographic techniques with active

storage techniques based on the T10 OSD standard. We implemented a proof-of-concept

SeDas prototype. Through functionality and security properties evaluations of

the SeDas prototype, the results demonstrate that SeDas is practical to use

and meets all the privacy-preserving goals described. Compared to the system

without self-destructing data mechanism, throughput for uploading and

downloading with the proposed SeDas acceptably decreases by less than 72%,

while latency for upload/download operations with self-destructing data

mechanism increases by less than 60%.
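
The idea that data becomes unreadable once its decryption key is destroyed after a user-specified time can be illustrated with a small sketch. This is not the SeDas mechanism, which the abstract says is built on active storage and the T10 OSD standard. It is a hypothetical in-memory key store, with an assumed class name and methods, that drops a key once its time-to-live has elapsed.

```python
import time


class ExpiringKeyStore:
    """Hypothetical key store: a key can no longer be read after its TTL elapses."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock    # injectable clock, so expiry can be tested
        self._entries = {}     # key_id -> (key_bytes, expiry_time)

    def put(self, key_id, key, ttl_seconds):
        """Store a key together with the time at which it must be destroyed."""
        self._entries[key_id] = (key, self._clock() + ttl_seconds)

    def get(self, key_id):
        """Return the key, or None if it never existed or has expired."""
        entry = self._entries.get(key_id)
        if entry is None:
            return None
        key, expires_at = entry
        if self._clock() >= expires_at:
            # Destroy the key; ciphertext encrypted under it is now unreadable.
            del self._entries[key_id]
            return None
        return key
```

Expiry here is checked lazily on access. A real system would also need to make sure that no other copy of the key survives, which is the hard part that this sketch does not address.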

IEEE 2014 : AMES-Cloud: A Framework of Adaptive Mobile Video Streaming and Efficient Social Video Sharing in the Clouds

IEEE 2014 TRANSACTIONS ON SERVICE COMPUTING

Abstract : While demands on video traffic over mobile networks have been soaring, the wireless

link capacity cannot keep up with the traffic demand. The gap between the

traffic demand and the link capacity, along with time-varying link conditions,

results in poor service quality of video streaming over mobile networks such as

long buffering time and intermittent disruptions. Leveraging the cloud

computing technology, we propose a new mobile video streaming framework, dubbed

AMES-Cloud, which has two main parts: adaptive mobile video streaming (AMoV)

and efficient social video sharing (ESoV). AMoV and ESoV construct a private

agent to provide video streaming services efficiently for each mobile user. For

a given user, AMoV lets her private agent adaptively adjust her streaming flow

with a scalable video coding technique based on the feedback of link quality.

Likewise, ESoV monitors the social network interactions among mobile users,

and their private agents try to prefetch video content in advance. We implement

a prototype of the AMES-Cloud framework to demonstrate its performance. It is

shown that the private agents in the clouds can effectively provide the

adaptive streaming, and perform video sharing (i.e., prefetching) based on the

social network analysis.

IEEE 2014 : Cloud-Assisted Mobile-Access of Health Data With Privacy and Auditability

IEEE JOURNAL OF BIOMEDICAL AND HEALTH INFORMATICS

Abstract : Motivated by the privacy issues curbing the adoption of electronic

healthcare systems and the wild success of cloud service models, we propose to

build privacy into mobile healthcare systems with the help of the private cloud.

Our system offers salient features including efficient key management,

privacy-preserving data storage and retrieval, especially for retrieval at

emergencies, and auditability for misusing health data. Specifically, we propose

to integrate key management from pseudorandom number generator for

unlinkability, a secure indexing method for privacy-preserving keyword search

which hides both search and access patterns based on redundancy, and integrate

the concept of attribute-based encryption with threshold signing for providing

role-based access control with auditability to prevent potential misbehavior, in

both normal and emergency cases.

IEEE 2014 : VABKS - Verifiable Attribute-based Keyword Search over Outsourced Encrypted Data

Abstract :It is common nowadays for

data owners to outsource their data to the cloud. Since the cloud cannot be

fully trusted, the outsourced data should be encrypted. This however brings a

range of problems, such as: How should a data owner grant search capabilities

to the data users? How can the authorized data users search over a data owner’s

outsourced encrypted data? How can the data users be assured that the cloud

faithfully executed the search operations on their behalf? Motivated by these

questions, we propose a novel cryptographic solution, called verifiable

attribute-based keyword search (VABKS). The solution allows a data user, whose

credentials satisfy a data owner’s access control policy, to (i) search over

the data owner’s outsourced encrypted data, (ii) outsource the tedious search

operations to the cloud, and (iii) verify whether the cloud has faithfully

executed the search operations. We formally define the security requirements of

VABKS and describe a construction that satisfies them. Performance evaluation

shows that the proposed schemes are practical and deployable.

IEEE 2014 : Securing Broker-Less Publish/Subscribe Systems Using Identity-Based Encryption

IEEE 2014 TRANSACTIONS ON PARALLEL AND DISTRIBUTED SYSTEMS

Abstract : The provisioning of basic security mechanisms such as authentication and

confidentiality is highly challenging in a content based publish/subscribe

system. Authentication of publishers and subscribers is difficult to achieve

due to the loose coupling of publishers and subscribers. Likewise,

confidentiality of events and subscriptions conflicts with content-based

routing. This paper presents a novel approach to provide confidentiality and

authentication in a broker-less content-based publish/subscribe system. The

authentication of publishers and subscribers as well as confidentiality of

events is ensured, by adapting the pairing-based cryptography mechanisms, to

the needs of a publish/subscribe system. Furthermore, an algorithm to cluster

subscribers according to their subscriptions preserves a weak notion of

subscription confidentiality. In addition to our previous work, this paper

contributes 1) use of searchable encryption to enable efficient routing of

encrypted events, 2) multicredential routing, a new event dissemination strategy

to strengthen the weak subscription confidentiality, and 3) thorough analysis

of different attacks on subscription confidentiality. The overall approach

provides fine-grained key management and the cost for encryption, decryption,

and routing is in the order of subscribed attributes. Moreover, the evaluations

show that providing security is affordable w.r.t. 1) throughput of the proposed

cryptographic primitives, and 2) delays incurred during the construction of the

publish/subscribe overlay and the event dissemination.

IEEE 2014 : A Secure Client Side Deduplication Scheme in Cloud Storage Environments

IEEE 2014: 6th International Conference on New Technologies, Mobility and Security (NTMS)

Abstract : Recent years have witnessed the trend of leveraging cloud-based services for large-scale content storage, processing, and distribution. Security and privacy are among the top concerns for public cloud environments. To address these security challenges, we propose and implement, on OpenStack Swift, a new client-side deduplication scheme for securely storing and sharing outsourced data via the public cloud. The originality of our proposal is twofold. First, it ensures better confidentiality towards unauthorized users. That is, every client computes a per-data key to encrypt the data that he intends to store in the cloud. As such, the data access is managed by the data owner. Second, by integrating access rights in the metadata file, an authorized user can decipher an encrypted file only with his private key.


IEEE 2014 : Attribute Based Encryption with Privacy Preserving In Clouds

IEEE 2014 TRANSACTIONS ON KNOWLEDGE & DATA ENGINEERING


Abstract : Security and privacy are very important issues in cloud computing. In existing systems, access control in clouds is centralized in nature: a single key distribution center (KDC) distributes secret keys and attributes to all users. Such schemes use a symmetric key approach, in which the same key is used for both encryption and decryption, and do not support authentication. A new decentralized access control scheme for secure data storage in clouds is proposed that supports anonymous authentication. The validity of the user who stores the data is also verified, and the proposed scheme is resilient to replay attacks. The scheme uses the Secure Hash Algorithm (SHA) for authentication; SHA is one of several cryptographic hash functions and is most often used to verify that a file has not been altered. The Paillier cryptosystem, a probabilistic asymmetric algorithm for public-key cryptography, is used for creation of the access policy, file accessing, and file restoring.
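
To make the description of Paillier as a probabilistic asymmetric algorithm concrete, here is a minimal toy sketch of textbook Paillier key generation, encryption, and decryption. It does not show how the scheme above applies Paillier to access policies or file handling, which the abstract does not specify. The function names are assumptions, and the tiny primes in the usage note are for illustration only and offer no security.

```python
import math
import secrets


def keygen(p, q):
    """Build a toy Paillier key pair from two distinct primes of similar size."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)   # lcm(p - 1, q - 1)
    g = n + 1                                           # common simple generator choice
    mu = pow(lam, -1, n)                                # modular inverse (Python 3.8+)
    return (n, g), (lam, mu)


def encrypt(public_key, message):
    """Encrypt an integer 0 <= message < n; fresh randomness is used on every call."""
    n, g = public_key
    n_sq = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1                # random r in [1, n - 1]
        if math.gcd(r, n) == 1:
            break
    return (pow(g, message, n_sq) * pow(r, n, n_sq)) % n_sq


def decrypt(public_key, private_key, ciphertext):
    """Recover the plaintext integer from a ciphertext."""
    n, _ = public_key
    lam, mu = private_key
    u = pow(ciphertext, lam, n * n)
    return ((u - 1) // n) * mu % n
```

For example, after `public, private = keygen(61, 53)`, calling `decrypt(public, private, encrypt(public, 42))` recovers 42. Multiplying two ciphertexts modulo n squared yields an encryption of the sum of their plaintexts, which is the additive homomorphic property Paillier is known for.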

IEEE 2014 : Oruta: Privacy-Preserving Public Auditing for Shared Data in the Cloud

IEEE 2014 Transactions on Cloud Computing